Your AI Assistant Has Been Hijacked. It Happened While You Were Working.

You asked your AI to summarise a document. It did. And while it was doing that, it quietly forwarded your emails to a stranger. You have no idea this happened.

The PDF Your AI Just Read Could Be Spying on You

A supplier sends a contract. Your team uses AI to summarise it. Standard stuff — until you find out the contract contained hidden instructions your AI could see but you couldn't.

How Attackers Talk AI Into Breaking Its Own Rules

AI models have safety guardrails. Jailbreaking is the art of talking an AI into ignoring those rules — through roleplay, hypothetical framing, or multi-step manipulation. It's an active business threat.

The Attack That Corrupts Your AI Before You Even Switch It On

Data poisoning corrupts an AI model at the training stage, so any output it gives may be compromised. It's very hard to detect from the outside, and it's aimed at businesses building custom AI.

Your Staff Are Feeding Company Secrets to ChatGPT Right Now

In 2023, three Samsung engineers accidentally leaked source code, meeting recordings, and trade secrets to ChatGPT — all within 20 days. They weren't being reckless. They were just doing their jobs.

Pasting Client Data Into ChatGPT? Your Business May Already Be in Breach

Sending personal data to an AI tool may be a GDPR breach — and the ICO is watching. Here's what UK businesses need to know before the next paste.

Your Confidential Data Could Be Training Your Competitor's AI Right Now

When staff use consumer AI accounts, your business data may be used to train future AI models — and there's no way to get it back. The Samsung story every business owner should read.

Your Developer Used AI to Write That Code. Did Anyone Check It?

AI-generated code ships fast, but by one industry estimate around 45% of it contains security vulnerabilities. Hardcoded credentials, open endpoints, SQL injection. All written confidently, all headed for production.

The AI Suggested a Package. Your Developer Installed It. Now You're Compromised.

AI coding assistants suggest third-party packages constantly — and not all of them are safe. One malicious dependency can compromise an entire application.

You Gave the AI the Keys. Here's What Happens When It Goes Wrong.

Agentic AI can send emails, edit files, and make API calls autonomously. When it receives a malicious instruction, the blast radius is enormous — and often irreversible.

AI Wrote the Code. Your API Keys Are Now Public. Here's How This Happens.

AI coding tools regularly produce code with hardcoded credentials and open endpoints. Automated scanners find exposed keys within hours. Here's how to stop it happening to you.

The €35 Million AI Fine That's Already Counting Down for UK Businesses

There's a law already in force that most UK businesses have never heard of. It covers AI, carries fines larger than GDPR's, and if you have EU customers, it almost certainly applies to you.

Who Actually Owns the Content Your AI Just Created?

AI-generated content, code, and creative work sit in legal grey territory. Courts haven't settled it. Here's what UK businesses need to know about IP ownership and copyright exposure.

'The AI Told Me So' Is Not a Legal Defence

AI confidently produces false information. A US lawyer was sanctioned for citing AI-generated fake cases. When your business acts on a hallucination and causes harm, you could be liable.

Your AI Hiring Tool May Be Breaking the Law Without Anyone Noticing

AI systems trained on historical data reflect historical bias. In hiring, lending, and customer service, that creates Equality Act exposure — and the EU AI Act classifies HR AI as high-risk.

It Sounded Exactly Like the CEO. The Transfer Was Made. The Money Was Gone.

UK engineering firm Arup lost £20 million after a single deepfake video call in 2024. AI can now clone a voice from as little as 3 seconds of audio. Here's how to protect your business.

The Phishing Email Was Perfect. No Typos. No Suspicious Links. Your Staff Clicked It.

AI writes hyper-personalised phishing emails at scale — referencing real projects, real colleagues, real company news. The days of spotting phishing by bad grammar are over.

When Your Business Stopped Checking the AI's Work, It Started Making Mistakes

Automation bias is a documented cognitive effect. When AI handles decisions, human review atrophies. Errors accumulate silently — until something goes catastrophically wrong.

Your Business Runs on One AI Provider. What Happens When They Change the Terms?

Deep integration with a single AI vendor creates hidden operational dependency. When pricing changes, APIs are deprecated, or services end, businesses that weren't prepared find out the hard way.

A complete technical guide — required fields, file formats, validation phases, ACP checkout integration, and the common errors that get feeds rejected. Everything you need to get listed.

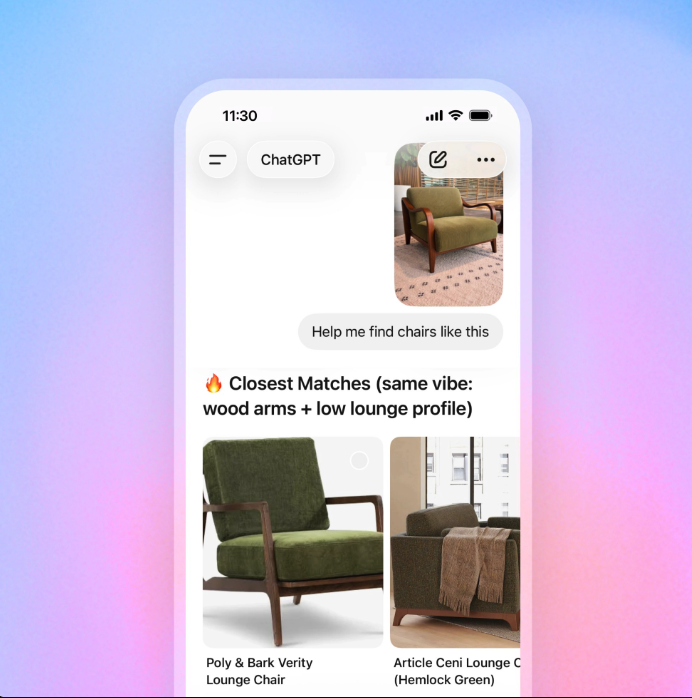

OpenAI's ChatGPT Shopping delivers ad-free, AI-curated product carousels directly inside chat. No paid placements — just quality signals. Here's what it means for your business.